This is a fixed-text formatted version of a Jupyter notebook

You can contribute with your own notebooks in this GitHub repository.

Source files: event_sampling.ipynb | event_sampling.py

Event Sampling¶

Prerequisites¶

To understand how to generate a Model and a MapDataset, and how to fit the data, please refer to the gammapy.modeling.models.SkyModel and simulate_3d.

Context¶

This tutorial describes how to sample events from an observation of a one (or more) gamma-ray source(s). The main aim of the tutorial will be to set the minimal configuration needed to deal with the Gammapy event-sampler and how to obtain an output photon event list.

The core of the event sampling lies into the Gammapy gammapy.datasets.MapDatasetEventSampler class, which is based on the inverse cumulative distribution function (Inverse CDF).

The gammapy.datasets.MapDatasetEventSampler takes in input a gammapy.datasets.Dataset object containing the spectral, spatial and temporal properties of the source(s) of interest.

The gammapy.datasets.MapDatasetEventSampler class evaluates the map of predicted counts (npred) per bin of the given Sky model, and the npred map is then used to sample the events. In particular, the output of the event-sampler will be a set of events having information about their true coordinates, true energies and times of arrival.

To these events, IRF corrections (i.e. PSF and energy dispersion) can also further applied in order to obtain reconstructed coordinates and energies of the sampled events.

At the end of this process, you will obtain an event-list in FITS format.

Objective¶

Describe the process of sampling events from a given Sky model and obtaining an output event-list.

Proposed approach¶

In this section, we will show how to define a gammapy.data.Observations and to create a gammapy.datasets.Dataset object (for more info on gammapy.datasets.Dataset objects, please visit this link). These are both necessary for the event sampling. Then, we will define the Sky model from which we sample events.

In this tutorial, we propose two examples for sampling events: one chosing a point-like source and one using a template map.

Setup¶

As usual, let’s start with some general imports…

[1]:

%matplotlib inline

[2]:

import matplotlib.pyplot as plt

from pathlib import Path

import numpy as np

import copy

import astropy.units as u

from astropy.io import fits

from astropy.coordinates import SkyCoord

from gammapy.data import DataStore, GTI, Observation

from gammapy.datasets import MapDataset, MapDatasetEventSampler

from gammapy.maps import MapAxis, WcsGeom, Map

from gammapy.irf import load_cta_irfs

from gammapy.makers import MapDatasetMaker

from gammapy.modeling import Fit

from gammapy.modeling.models import (

Model,

Models,

SkyModel,

PowerLawSpectralModel,

PointSpatialModel,

GaussianSpatialModel,

SkyDiffuseCube,

)

from regions import CircleSkyRegion

Define an Observation¶

You can firstly create a gammapy.data.Observations object that contains the pointing position, the GTIs and the IRF you want to consider.

Hereafter, we chose the IRF of the South configuration used for the CTA DC1 and we set the pointing position of the simulated field at the Galactic Center. We also fix the exposure time to 1 hr.

Let’s start with some initial settings:

[3]:

IRF_FILE = (

"$GAMMAPY_DATA/cta-1dc/caldb/data/cta/1dc/bcf/South_z20_50h/irf_file.fits"

)

POINTING = SkyCoord(0.0, 0.0, frame="galactic", unit="deg")

LIVETIME = 1 * u.hr

Now you can create the observation:

[4]:

irfs = load_cta_irfs(IRF_FILE)

observation = Observation.create(

obs_id=1001, pointing=POINTING, livetime=LIVETIME, irfs=irfs

)

Define the MapDataset¶

Let’s generate the gammapy.datasets.Dataset object: we define the energy axes (true and reconstruncted), the migration axis and the geometry of the observation.

This is a crucial point for the correct configuration of the event sampler. Indeed the spatial and energetic binning should be treaten carefully and… the finer the better. For this reason, we suggest to define the energy axes by setting a minimum binning of least 10-20 bins per decade for all the sources of interest. The spatial binning may instead be different from source to source and, at first order, it should be adopted a binning significantly smaller than the expected source size.

For the examples that will be shown hereafter, we set the geometry of the dataset to a field of view of 2degx2deg and we bin the spatial map with pixels of 0.02 deg.

[5]:

ENERGY_AXIS = MapAxis.from_energy_bounds(

"0.1 TeV", "100 TeV", nbin=10, per_decade=True

)

ENERGY_AXIS_TRUE = MapAxis.from_energy_bounds(

"0.03 TeV", "300 TeV", nbin=20, per_decade=True, name="energy_true"

)

MIGRA_AXIS = MapAxis.from_bounds(

0.5, 2, nbin=150, node_type="edges", name="migra"

)

WCS_GEOM = WcsGeom.create(

skydir=POINTING,

width=(2, 2),

binsz=0.02,

frame="galactic",

axes=[ENERGY_AXIS],

)

In the following, the dataset is created by selecting the effective area, background model, the PSF and the Edisp from the IRF. The dataset thus produced can be saved into a FITS file just using the write() function. We put it into the evt_sampling sub-folder:

[6]:

%%time

empty = MapDataset.create(

WCS_GEOM, energy_axis_true=ENERGY_AXIS_TRUE, migra_axis=MIGRA_AXIS

)

maker = MapDatasetMaker(selection=["exposure", "background", "psf", "edisp"])

dataset = maker.run(empty, observation)

Path("event_sampling").mkdir(exist_ok=True)

dataset.write("./event_sampling/dataset.fits", overwrite=True)

CPU times: user 1.49 s, sys: 510 ms, total: 2 s

Wall time: 2.03 s

Define the Sky model: a point-like source¶

Now let’s define a Sky model (see how to create it here) for a point-like source centered 0.5 deg far from the Galactic Center and with a power-law spectrum. We then save the model into a yaml file.

[7]:

spectral_model_pwl = PowerLawSpectralModel(

index=2, amplitude="1e-12 TeV-1 cm-2 s-1", reference="1 TeV"

)

spatial_model_point = PointSpatialModel(

lon_0="0 deg", lat_0="0.5 deg", frame="galactic"

)

sky_model_pntpwl = SkyModel(

spectral_model=spectral_model_pwl,

spatial_model=spatial_model_point,

name="point-pwl",

)

models_pntpwl = Models([sky_model_pntpwl])

file_model = "./event_sampling/point-pwl.yaml"

models_pntpwl.write(file_model, overwrite=True)

Sampling the source and background events¶

Now, we can finally add the gammapy.modeling.models.SkyModel we want to event-sample to the gammapy.datasets.Dataset container:

[8]:

dataset.models.extend(models_pntpwl)

print(dataset.models)

Models

Component 0: BackgroundModel

Name : 5x1qxXyj-bkg

Datasets names : ['5x1qxXyj']

Parameters:

norm : 1.000

tilt (frozen) : 0.000

reference (frozen) : 1.000 TeV

Component 1: SkyModel

Name : point-pwl

Datasets names : None

Spectral model type : PowerLawSpectralModel

Spatial model type : PointSpatialModel

Temporal model type : None

Parameters:

index : 2.000

amplitude : 1.00e-12 1 / (cm2 s TeV)

reference (frozen) : 1.000 TeV

lon_0 : 0.000 deg

lat_0 : 0.500 deg

The next step shows how to sample the events with the gammapy.datasets.MapDatasetEventSampler class. The class requests a random number seed generator (that we set with random_state=0), the gammapy.datasets.Dataset and the gammapy.data.Observations object. From the latter, the gammapy.datasets.MapDatasetEventSampler class takes all the meta data information.

[9]:

%%time

sampler = MapDatasetEventSampler(random_state=0)

events = sampler.run(dataset, observation)

CPU times: user 1.08 s, sys: 96.6 ms, total: 1.17 s

Wall time: 1.18 s

The output of the event-sampler is an event list with coordinates, energies and time of arrivals of the source and background events. Source and background events are flagged by the MC_ID identifier (where 0 is the default identifier for the background).

[10]:

print(f"Source events: {(events.table['MC_ID'] == 2).sum()}")

print(f"Background events: {(events.table['MC_ID'] == 0).sum()}")

Source events: 178

Background events: 11804

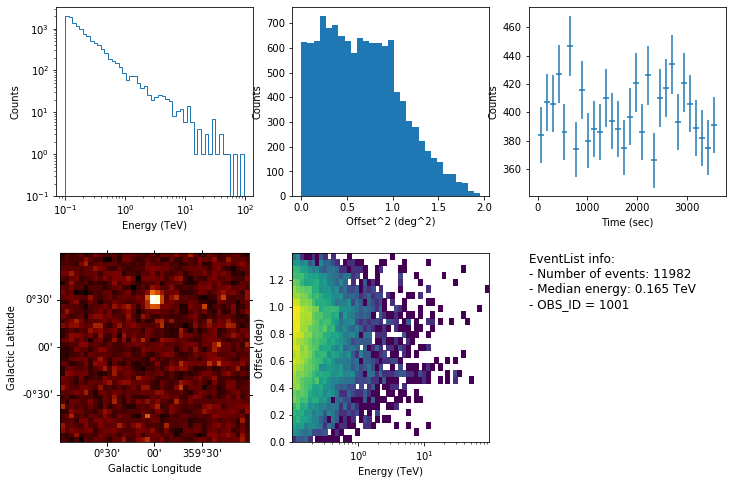

We can inspect the properties of the simulated events as follows:

[11]:

events.peek()

By default, the gammapy.datasets.MapDatasetEventSampler fills the metadata keyword OBJECT in the event list using the first model of the SkyModel object. You can change it with the following commands:

[12]:

events.table.meta["OBJECT"] = dataset.models[1].name

Let’s write the event list and its GTI extension to a FITS file. We make use of fits library in astropy:

[13]:

primary_hdu = fits.PrimaryHDU()

hdu_evt = fits.BinTableHDU(events.table)

hdu_gti = fits.BinTableHDU(dataset.gti.table, name="GTI")

hdu_all = fits.HDUList([primary_hdu, hdu_evt, hdu_gti])

hdu_all.writeto("./event_sampling/events_0001.fits", overwrite=True)

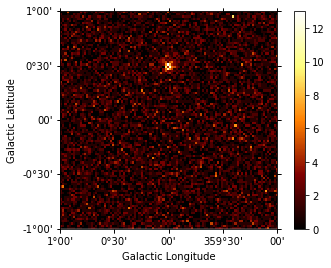

Generate a skymap¶

A skymap of the simulated events can be obtained with:

[14]:

counts = Map.create(

frame="galactic", skydir=(0, 0.0), binsz=0.02, npix=(100, 100)

)

counts.fill_events(events)

counts.plot(add_cbar=True);

Fit the simulated data¶

We can now check the sake of the event sampling by fitting the data (a tutorial of source fitting is here and here). We make use of the same gammapy.modeling.models.Models adopted for the simulation. Hence, we firstly read the gammapy.datasets.Dataset and the model file, and we fill the gammapy.datasets.Dataset with the sampled events.

[15]:

dataset = MapDataset.read("./event_sampling/dataset.fits")

models_sim_point = Models.read("./event_sampling/point-pwl.yaml")

counts = Map.from_geom(WCS_GEOM)

counts.fill_events(events)

dataset.counts = counts

dataset.models.extend(models_sim_point)

Let’s fit the data and look at the results:

[16]:

%%time

fit = Fit([dataset])

result = fit.run(optimize_opts={"print_level": 1})

print(result)

------------------------------------------------------------------

| FCN = 7.137E+04 | Ncalls=100 (100 total) |

| EDM = 2.26E-05 (Goal: 1E-05) | up = 1.0 |

------------------------------------------------------------------

| Valid Min. | Valid Param. | Above EDM | Reached call limit |

------------------------------------------------------------------

| True | True | False | False |

------------------------------------------------------------------

| Hesse failed | Has cov. | Accurate | Pos. def. | Forced |

------------------------------------------------------------------

| False | True | True | True | False |

------------------------------------------------------------------

OptimizeResult

backend : minuit

method : minuit

success : True

message : Optimization terminated successfully.

nfev : 100

total stat : 71371.11

CPU times: user 13.2 s, sys: 601 ms, total: 13.8 s

Wall time: 13.9 s

[17]:

result.parameters.to_table()

[17]:

| name | value | unit | min | max | frozen | error |

|---|---|---|---|---|---|---|

| str9 | float64 | str14 | float64 | float64 | bool | float64 |

| norm | 9.894e-01 | 0.000e+00 | nan | False | 9.154e-03 | |

| tilt | 0.000e+00 | nan | nan | True | 0.000e+00 | |

| reference | 1.000e+00 | TeV | nan | nan | True | 0.000e+00 |

| index | 1.998e+00 | nan | nan | False | 6.349e-02 | |

| amplitude | 1.042e-12 | cm-2 s-1 TeV-1 | nan | nan | False | 9.926e-14 |

| reference | 1.000e+00 | TeV | nan | nan | True | 0.000e+00 |

| lon_0 | 1.272e-03 | deg | nan | nan | False | 3.447e-03 |

| lat_0 | 5.022e-01 | deg | -9.000e+01 | 9.000e+01 | False | 3.424e-03 |

The results looks great!

Extended source using a template¶

The event sampler can also work with a template model. Here we use the interstellar emission model map of the Fermi 3FHL, which can be found in the GAMMAPY data repository.

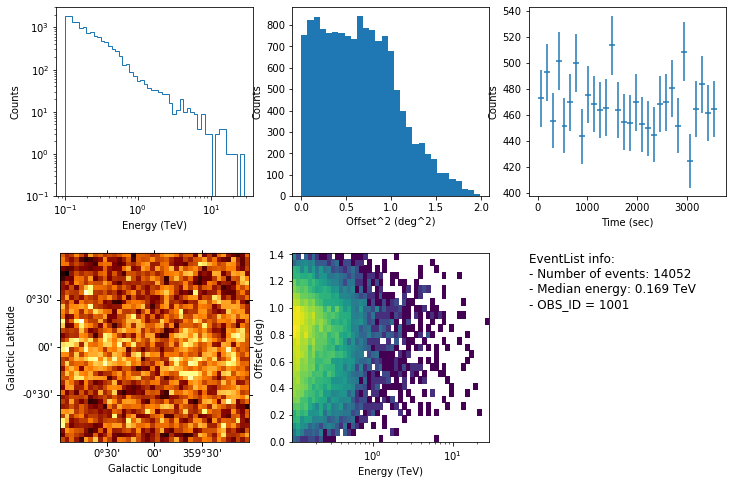

We proceed following the same steps showed above and we finally have a look at the event’s properties:

[18]:

diffuse = SkyDiffuseCube.read(

"$GAMMAPY_DATA/fermi-3fhl-gc/gll_iem_v06_gc.fits.gz"

)

models_diffuse = Models([diffuse])

file_model = "./event_sampling/diffuse.yaml"

models_diffuse.write(file_model, overwrite=True)

[19]:

dataset = MapDataset.read("./event_sampling/dataset.fits")

dataset.models.extend(models_diffuse)

print(dataset.models)

Models

Component 0: BackgroundModel

Name : avWdQV1s-bkg

Datasets names : ['avWdQV1s']

Parameters:

norm : 1.000

tilt (frozen) : 0.000

reference (frozen) : 1.000 TeV

Component 1: SkyDiffuseCube

Name : gll_iem_v06_gc.fits

Datasets names : None

Parameters:

norm : 1.000

tilt (frozen) : 0.000

reference (frozen) : 1.000 TeV

[20]:

%%time

sampler = MapDatasetEventSampler(random_state=0)

events = sampler.run(dataset, observation)

CPU times: user 2.66 s, sys: 494 ms, total: 3.15 s

Wall time: 3.16 s

[21]:

events.peek()

Simulate mutiple event list¶

In some user case, you may want to sample events from a number of observations. In this section, we show how to simulate a set of event lists. For simplicity we consider only one point-like source, observed three times for 1 hr and assuming the same pointing position.

Let’s firstly define the time start and the livetime of each observation:

[22]:

tstarts = [1, 5, 7] * u.hr

livetimes = [1, 1, 1] * u.hr

[23]:

for idx, tstart in enumerate(tstarts):

observation = Observation.create(

obs_id=idx,

pointing=POINTING,

tstart=tstart,

livetime=livetimes[idx],

irfs=irfs,

)

dataset = maker.run(empty, observation)

dataset.models.extend(models_pntpwl)

sampler = MapDatasetEventSampler(random_state=idx)

events = sampler.run(dataset, observation)

events.table.write(

f"./event_sampling/events_{idx:04d}.fits", overwrite=True

)

You can now load the event list with Datastore.from_events_files() and make your own analysis following the instructions in the `analysis_2 <analysis_2.ipynb>`__ tutorial.

[24]:

path = Path("./event_sampling/")

paths = list(path.rglob("events*.fits"))

data_store = DataStore.from_events_files(paths)

data_store.obs_table

[24]:

| OBS_ID | RA_PNT | DEC_PNT | GLON_PNT | GLAT_PNT | ZEN_PNT | ALT_PNT | AZ_PNT | ONTIME | LIVETIME | DEADC | TSTART | TSTOP | DATE-OBS | TIME-OBS | DATE-END | TIME-END | N_TELS | OBJECT | TELESCOP | CALDB | IRF | EVENTS_FILENAME | EVENT_COUNT |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| deg | deg | deg | deg | deg | deg | deg | s | s | s | s | |||||||||||||

| int64 | float64 | float64 | float64 | float64 | float64 | str6 | str5 | float64 | float64 | float64 | float64 | float64 | str13 | str13 | str13 | str13 | str1 | str9 | str3 | str3 | str12 | str31 | int64 |

| 2 | 266.4049882865447 | -28.93617776179147 | 0.0 | 4.4527765540489235e-14 | 70.0 | 20.000 | 0.000 | 3600.0 | 3600.0 | 0.0 | 25199.99999979045 | 28800.00000020955 | NOT AVAILABLE | NOT AVAILABLE | NOT AVAILABLE | NOT AVAILABLE | point-pwl | CTA | 1dc | South_z20_50 | event_sampling/events_0002.fits | 12232 | |

| 0 | 266.4049882865447 | -28.93617776179147 | 0.0 | 4.4527765540489235e-14 | 70.0 | 20.000 | 0.000 | 3600.0 | 3600.0 | 0.0 | 3599.999999790452 | 7200.000000209548 | NOT AVAILABLE | NOT AVAILABLE | NOT AVAILABLE | NOT AVAILABLE | point-pwl | CTA | 1dc | South_z20_50 | event_sampling/events_0000.fits | 11982 | |

| 1 | 266.4049882865447 | -28.93617776179147 | 0.0 | 4.4527765540489235e-14 | 70.0 | 20.000 | 0.000 | 3600.0 | 3600.0 | 0.0 | 18000.00000020955 | 21600.0 | NOT AVAILABLE | NOT AVAILABLE | NOT AVAILABLE | NOT AVAILABLE | point-pwl | CTA | 1dc | South_z20_50 | event_sampling/events_0001.fits | 12171 |

Exercises¶

Try to sample events for an extended source (e.g. a radial gaussian morphology);

Change the spatial model and the spectrum of the simulated Sky model;

Include a temporal model in the simulation