This is a fixed-text formatted version of a Jupyter notebook


- Source files: spectrum_analysis.ipynb | spectrum_analysis.py

Spectral analysis with Gammapy

Introduction

This notebook explains in detail how to use the classes in gammapy.spectrum and related ones.

Based on a dataset of 4 Crab observations with H.E.S.S. (simulated events for now), we will perform a full region-based spectral analysis, i.e. extract source and background counts from certain regions and fit them using the forward-folding approach. We will use the following classes:

Data handling:

- gammapy.data.DataStore

- gammapy.data.DataStoreObservation

- gammapy.data.ObservationStats

- gammapy.data.ObservationSummary

To extract the 1-dim spectral information:

- gammapy.spectrum.ReflectedRegionsBackgroundEstimator

- gammapy.spectrum.SpectrumExtraction

To perform the joint fit:

- gammapy.spectrum.SpectrumDatasetOnOff

- gammapy.modeling.models.PowerLawSpectralModel

- gammapy.modeling.models.ExpCutoffPowerLawSpectralModel

- gammapy.modeling.models.LogParabolaSpectralModel

To compute flux points (a.k.a. “SED” = “spectral energy distribution”):

- gammapy.spectrum.FluxPointsEstimator

Feedback welcome!

Setup

As usual, we’ll start with some setup …

[1]:

%matplotlib inline

import matplotlib.pyplot as plt

[2]:

# Check package versions

import gammapy

import numpy as np

import astropy

import regions

print("gammapy:", gammapy.__version__)

print("numpy:", np.__version__)

print("astropy", astropy.__version__)

print("regions", regions.__version__)

gammapy: 0.14

numpy: 1.17.2

astropy 3.2.1

regions 0.4

[3]:

import astropy.units as u

from astropy.coordinates import SkyCoord, Angle

from regions import CircleSkyRegion

from gammapy.maps import Map

from gammapy.modeling import Fit, Datasets

from gammapy.data import ObservationStats, ObservationSummary, DataStore

from gammapy.modeling.models import (

PowerLawSpectralModel,

create_crab_spectral_model,

)

from gammapy.spectrum import (

SpectrumExtraction,

FluxPointsEstimator,

FluxPointsDataset,

ReflectedRegionsBackgroundEstimator,

)

Load Data

First, we select and load some H.E.S.S. observations of the Crab nebula (simulated events for now).

We will access the events, effective area, energy dispersion, livetime and PSF for containment correction.

[4]:

datastore = DataStore.from_dir("$GAMMAPY_DATA/hess-dl3-dr1/")

obs_ids = [23523, 23526, 23559, 23592]

observations = datastore.get_observations(obs_ids)

Define Target Region

The next step is to define a signal extraction region, also known as the on region. In the simplest case this is just a CircleSkyRegion, which is what we use here.

[5]:

target_position = SkyCoord(ra=83.63, dec=22.01, unit="deg", frame="icrs")

on_region_radius = Angle("0.11 deg")

on_region = CircleSkyRegion(center=target_position, radius=on_region_radius)

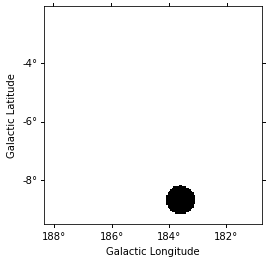

Create exclusion mask

We will use the reflected regions method to place off regions to estimate the background level in the on region. To make sure the off regions don’t contain gamma-ray emission, we create an exclusion mask.

Using http://gamma-sky.net/ we find that there’s only one known gamma-ray source near the Crab nebula: the AGN called RGB J0521+212 at GLON = 183.604 deg and GLAT = -8.708 deg.

[6]:

exclusion_region = CircleSkyRegion(

center=SkyCoord(183.604, -8.708, unit="deg", frame="galactic"),

radius=0.5 * u.deg,

)

skydir = target_position.galactic

exclusion_mask = Map.create(

npix=(150, 150), binsz=0.05, skydir=skydir, proj="TAN", coordsys="GAL"

)

mask = exclusion_mask.geom.region_mask([exclusion_region], inside=False)

exclusion_mask.data = mask

exclusion_mask.plot();

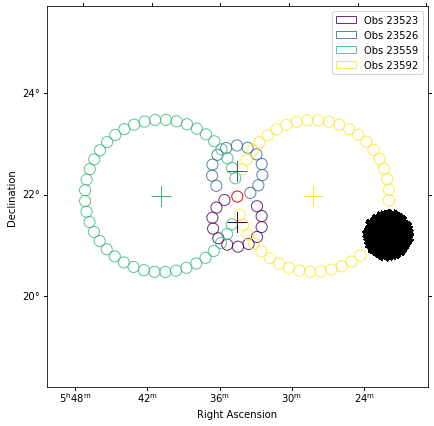
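The call region_mask([exclusion_region], inside=False) above returns a boolean array that is True outside the exclusion region, so off regions are only placed where the mask is True. As a rough illustration, the numpy-only sketch below builds such a mask on a flat pixel grid. The helper circular_exclusion_mask, the toy grid size and the circle position are hypothetical. Unlike gammapy, it ignores the map projection, which is a fair approximation only for small fields.

```python
import numpy as np


def circular_exclusion_mask(npix, binsz, center_offset, radius):
    """Boolean mask on a flat, small-field grid: True = usable, False = excluded.

    npix: pixels per side; binsz: pixel size in deg;
    center_offset: (dx, dy) of the excluded circle from the map centre in deg;
    radius: radius of the excluded circle in deg.
    """
    # Pixel-centre coordinates in deg, measured from the map centre
    coords = (np.arange(npix) - (npix - 1) / 2.0) * binsz
    x, y = np.meshgrid(coords, coords)  # x varies along columns, y along rows
    dist = np.hypot(x - center_offset[0], y - center_offset[1])
    # "inside=False" semantics: pixels inside the circle are set to False
    return dist > radius


# Hypothetical toy geometry, much smaller than the 150 x 150 map above
mask = circular_exclusion_mask(npix=20, binsz=0.05, center_offset=(0.3, -0.2), radius=0.2)
print(mask.shape, int((~mask).sum()), "excluded pixels")
```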

Estimate background

Next we will manually perform a background estimate by placing reflected regions around the pointing position and looking at the source statistics. This results in one gammapy.spectrum.BackgroundEstimate per observation, which serves as input for other classes in gammapy.

[7]:

background_estimator = ReflectedRegionsBackgroundEstimator(

observations=observations,

on_region=on_region,

exclusion_mask=exclusion_mask,

)

background_estimator.run()
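The idea behind reflected regions is geometric. Off regions have the same size as the on region and lie at the same offset from the pointing position, so they share the same acceptance. The sketch below is a simplified flat-sky version: it rotates the on-region centre around the pointing in steps just large enough to avoid overlap. The helper reflected_off_centers and the 0.5 deg offset are hypothetical. Gammapy's ReflectedRegionsBackgroundEstimator also checks the exclusion mask and applies its own spacing rules, so its number of off regions per observation can differ. With equal-size regions, alpha = 1 / N_off, so an Alpha of 0.083 ≈ 1/12 (as printed in the summary below) would correspond to 12 off regions.

```python
import numpy as np


def reflected_off_centers(pointing, on_center, on_radius):
    """Flat-sky sketch of reflected-region placement (all positions in deg).

    Off regions share the on region's radius and its offset from the pointing
    position; their centres are the on-region centre rotated around the
    pointing in steps just large enough to avoid overlap.
    """
    px, py = pointing
    dx, dy = on_center[0] - px, on_center[1] - py
    offset = np.hypot(dx, dy)
    phi0 = np.arctan2(dy, dx)
    # Two circles of radius on_radius on a ring of radius `offset` just touch
    # when the chord 2 * offset * sin(step / 2) equals 2 * on_radius
    step = 2.0 * np.arcsin(on_radius / offset)
    # Rotations k * step (k = 1, 2, ...) must also stay >= step away from the
    # on region on the other side, i.e. k * step <= 2 * pi - step
    n_off = int(np.floor(2.0 * np.pi / step - 1.0))
    phis = phi0 + step * np.arange(1, n_off + 1)
    return np.column_stack([px + offset * np.cos(phis), py + offset * np.sin(phis)])


# Hypothetical geometry: on region 0.5 deg from the pointing, radius 0.11 deg
off_centers = reflected_off_centers(pointing=(0.0, 0.0), on_center=(0.5, 0.0), on_radius=0.11)
# With equal-size regions, alpha = A_on / A_off = 1 / N_off
alpha = 1.0 / len(off_centers)
print(len(off_centers), "off regions, alpha =", round(alpha, 3))
```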

[8]:

plt.figure(figsize=(8, 8))

background_estimator.plot(add_legend=True);

<Figure size 576x576 with 0 Axes>

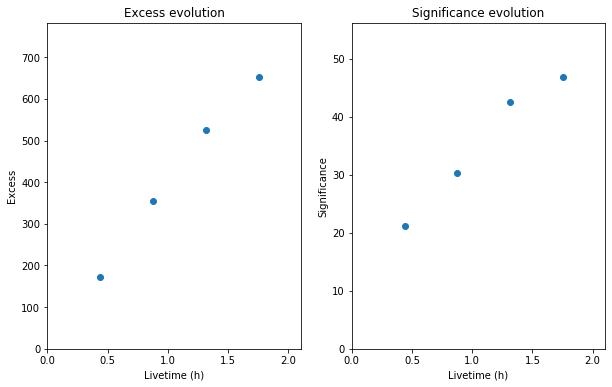

Source statistics

Next we’re going to look at the overall source statistics in our signal region. For more information about the debug plots you can create, check out the ObservationSummary class.

[9]:

stats = []

for obs, bkg in zip(observations, background_estimator.result):
    stats.append(ObservationStats.from_observation(obs, bkg))

print(stats[1])

obs_summary = ObservationSummary(stats)

fig = plt.figure(figsize=(10, 6))

ax1 = fig.add_subplot(121)

obs_summary.plot_excess_vs_livetime(ax=ax1)

ax2 = fig.add_subplot(122)

obs_summary.plot_significance_vs_livetime(ax=ax2);

*** Observation summary report ***

Observation Id: 23526

Livetime: 0.437 h

On events: 201

Off events: 228

Alpha: 0.083

Bkg events in On region: 19.00

Excess: 182.00

Excess / Background: 9.58

Gamma rate: 6.94 1 / min

Bkg rate: 0.72 1 / min

Sigma: 21.79
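The numbers in this report are linked by simple arithmetic. The background in the on region is alpha × N_off, the excess is N_on minus that background, and the rates divide by the livetime. For the significance, we assume the standard Li & Ma (1983, Eq. 17) on/off formula. We also assume alpha = 1/12 as the exact value behind the rounded 0.083, which is consistent with the printed background of 19.00. Under these assumptions, the sketch below reproduces the printed values:

```python
import numpy as np


def li_ma_significance(n_on, n_off, alpha):
    """Li & Ma (1983), Eq. 17: significance of an on/off counting measurement."""
    term_on = n_on * np.log((1 + alpha) / alpha * n_on / (n_on + n_off))
    term_off = n_off * np.log((1 + alpha) * n_off / (n_on + n_off))
    return np.sqrt(2.0 * (term_on + term_off))


n_on, n_off = 201, 228
alpha = 1.0 / 12            # assumed exact value behind the printed "Alpha: 0.083"
livetime_min = 0.437 * 60   # printed (rounded) livetime converted to minutes

background = alpha * n_off  # "Bkg events in On region"
excess = n_on - background  # "Excess"
sigma = li_ma_significance(n_on, n_off, alpha)
print(f"bkg={background:.2f} excess={excess:.2f} sigma={sigma:.2f}")
print(f"gamma rate={excess / livetime_min:.2f} /min, bkg rate={background / livetime_min:.2f} /min")
```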

Extract spectrum

Now, we’re going to extract a spectrum using the SpectrumExtraction class. We provide the reconstructed energy binning we want to use. It is expected to be a Quantity with unit energy, i.e. an array with an energy unit. We also provide the true energy binning to use.

[10]:

e_reco = np.logspace(-1, np.log10(40), 40) * u.TeV

e_true = np.logspace(np.log10(0.05), 2, 200) * u.TeV
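np.logspace returns bin edges, not bin centres. The arrays above therefore define 39 reconstructed-energy bins and 199 true-energy bins, each equally spaced in log(E). The true-energy range is wider than the reconstructed one, presumably so that the energy dispersion can still describe events that migrate into the reconstructed range from lower or higher true energies. A quick numpy check of these properties (units dropped, values in TeV):

```python
import numpy as np

# Same edges as above, as plain floats in TeV (astropy units left out)
e_reco = np.logspace(-1, np.log10(40), 40)    # 40 edges, 0.1 to 40 TeV
e_true = np.logspace(np.log10(0.05), 2, 200)  # 200 edges, 0.05 to 100 TeV

n_reco_bins = len(e_reco) - 1                 # edges -> bins
ratios = e_reco[1:] / e_reco[:-1]             # log spacing: constant hi/lo ratio
bins_per_decade = n_reco_bins / np.log10(e_reco[-1] / e_reco[0])
print(n_reco_bins, round(ratios[0], 4), round(bins_per_decade, 2))
```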

Instantiate a SpectrumExtraction object that will do the extraction. The containment_correction parameter allows for a PSF leakage correction if one is working with full-enclosure IRFs. After the extraction, we also compute an energy threshold and can store the result in OGIP-compliant files (PHA, RMF, ARF); this last step is optional.

[11]:

extraction = SpectrumExtraction(

observations=observations,

bkg_estimate=background_estimator.result,

containment_correction=False,

e_reco=e_reco,

e_true=e_true,

)
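Here containment_correction=False. As a rough picture of what the correction is for: with full-enclosure IRFs, only the fraction of the PSF that falls inside the on region contributes to the on counts, so the effective area is scaled down by that fraction. The sketch below assumes a radially symmetric 2D Gaussian PSF. For such a PSF, the fraction within radius r is 1 - exp(-r²/(2σ²)). The PSF widths and effective areas are hypothetical, and gammapy works with the actual PSF of each observation instead.

```python
import numpy as np


def gaussian_containment(radius, sigma):
    """Fraction of a radially symmetric 2D Gaussian PSF (width sigma) within radius."""
    return 1.0 - np.exp(-radius ** 2 / (2.0 * sigma ** 2))


on_radius = 0.11                        # deg, as on_region_radius above
sigma = np.array([0.05, 0.08, 0.12])    # deg, hypothetical PSF widths at three energies
aeff = np.array([2.0e4, 8.0e4, 1.2e5])  # m2, hypothetical full-enclosure effective areas

fraction = gaussian_containment(on_radius, sigma)
# Only this fraction of the source signal falls inside the on region
aeff_corrected = aeff * fraction
print(np.round(fraction, 3), aeff_corrected)
```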

[12]:

%%time

extraction.run()

CPU times: user 1.34 s, sys: 17.6 ms, total: 1.36 s

Wall time: 1.36 s

Now we can (optionally) compute the energy thresholds for the analysis, using one of several methods. Here we choose the energy at which the effective area drops below 10% of its maximum:

[13]:

# Add a condition on correct energy range in case it is not set by default

extraction.compute_energy_threshold(method_lo="area_max", area_percent_lo=10.0)

/Users/adonath/github/adonath/gammapy/gammapy/utils/interpolation.py:159: Warning: Interpolated values reached float32 precision limit

"Interpolated values reached float32 precision limit", Warning
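With method_lo="area_max", the low-energy threshold is the energy where the effective area reaches area_percent_lo percent of its maximum. Below is a simplified numpy sketch of that criterion on a hypothetical effective-area curve. The helper low_threshold_from_area is illustrative only. Gammapy evaluates the actual effective area of each observation, with its own energy grid and interpolation.

```python
import numpy as np


def low_threshold_from_area(energy, aeff, area_percent=10.0):
    """Lowest energy at which aeff reaches area_percent % of its maximum.

    Assumes aeff rises with energy at the low-energy end of the grid.
    """
    above = aeff >= area_percent / 100.0 * aeff.max()
    return energy[np.argmax(above)]  # index of the first True entry


energy = np.logspace(np.log10(0.05), 2, 200)  # TeV, same range as e_true above
# Hypothetical effective-area curve in m2 that switches on around 0.5 TeV
aeff = 1e5 / (1.0 + (0.5 / energy) ** 4)
e_thresh = low_threshold_from_area(energy, aeff)
print(f"threshold ~ {e_thresh:.3f} TeV")
```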

Let’s take a look at the datasets we just extracted:

[14]:

# Requires IPython widgets

# extraction.spectrum_observations.peek()

extraction.spectrum_observations[0].peek()

Finally, you can write the extracted datasets to disk using the OGIP format (PHA, ARF, RMF and BKG files):

[15]:

# ANALYSIS_DIR = "crab_analysis"

# extraction.write(outdir=ANALYSIS_DIR, overwrite=True)

If you want to read back the datasets from disk you can use:

[16]:

# from gammapy.spectrum import SpectrumDatasetOnOff
#
# datasets = []
# for obs_id in obs_ids:
#     filename = ANALYSIS_DIR + "/ogip_data/pha_obs{}.fits".format(obs_id)
#     datasets.append(SpectrumDatasetOnOff.from_ogip_files(filename))

Fit spectrum

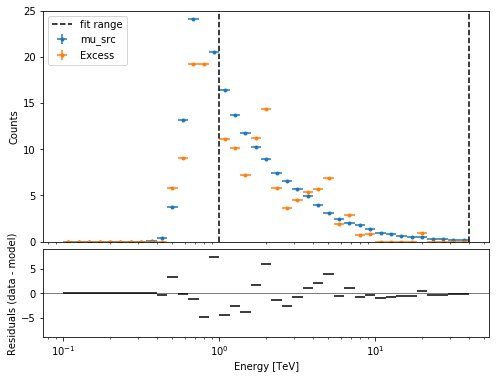

Now we’ll fit a global model to the spectrum. First, we do a joint likelihood fit to all observations; if you want to stack the observations instead, see the “Stack observations” section below. We will also produce a debug plot to show how the global fit matches one of the individual observations.

[17]:

model = PowerLawSpectralModel(

index=2, amplitude=2e-11 * u.Unit("cm-2 s-1 TeV-1"), reference=1 * u.TeV

)

datasets_joint = extraction.spectrum_observations

# All datasets share the same model object, so its parameters are fitted jointly
for dataset in datasets_joint:
    dataset.model = model

fit_joint = Fit(datasets_joint)

result_joint = fit_joint.run()

# we make a copy here to compare it later

model_best_joint = model.copy()

model_best_joint.parameters.covariance = result_joint.parameters.covariance

[18]:

print(result_joint)

OptimizeResult

backend : minuit

method : minuit

success : True

message : Optimization terminated successfully.

nfev : 47

total stat : 113.85
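In the joint fit, all four datasets share one model object, and the fit minimises the sum of the per-dataset fit statistics (the "total stat" printed above). The toy sketch below shows that structure using only numpy, under the following assumptions:

- The simple Cash statistic without background stands in for the WStat statistic of SpectrumDatasetOnOff.
- The exposures are hypothetical, energy-independent numbers.
- The "observed" counts are noise-free expected counts, so the best fit must recover the input parameters.
- A brute-force grid search stands in for Minuit.

```python
import numpy as np


def cash(n_obs, mu):
    """Cash statistic 2 * sum(mu - n ln mu), model-independent terms dropped."""
    return 2.0 * np.sum(mu - n_obs * np.log(mu))


def predicted_counts(edges, exposure, amplitude, index):
    """Power law dN/dE = amplitude * E**-index (E in TeV, reference 1 TeV),
    integrated over each energy bin and multiplied by a constant exposure."""
    lo, hi = edges[:-1], edges[1:]
    integral = amplitude / (1.0 - index) * (hi ** (1.0 - index) - lo ** (1.0 - index))
    return exposure * integral


edges = np.logspace(np.log10(0.7), np.log10(30), 11)  # TeV
exposures = [1.5e12, 1.6e12, 1.4e12, 1.5e12]          # cm2 s, hypothetical
true_amplitude, true_index = 2.8e-11, 2.6

# Noise-free ("Asimov") data: observed counts equal expected counts
data = [predicted_counts(edges, exp, true_amplitude, true_index) for exp in exposures]


def joint_stat(amplitude, index):
    """One shared model, one statistic per dataset, summed over datasets."""
    return sum(
        cash(n_obs, predicted_counts(edges, exp, amplitude, index))
        for n_obs, exp in zip(data, exposures)
    )


amplitudes = np.linspace(2.0e-11, 3.6e-11, 81)
indices = np.linspace(2.2, 3.0, 81)
grid = np.array([[joint_stat(a, g) for g in indices] for a in amplitudes])
i, j = np.unravel_index(np.argmin(grid), grid.shape)
best_amplitude, best_index = amplitudes[i], indices[j]
print(f"amplitude = {best_amplitude:.3e}, index = {best_index:.2f}")
```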

[19]:

plt.figure(figsize=(8, 6))

ax_spectrum, ax_residual = datasets_joint[0].plot_fit()

ax_spectrum.set_ylim(0, 25)

[19]:

(0, 25)

Compute Flux Points

To complete our analysis, we can compute flux points by fitting the norm of the global model in energy bands. We’ll use a fixed energy binning for now:

[20]:

e_min, e_max = 0.7, 30

e_edges = np.logspace(np.log10(e_min), np.log10(e_max), 11) * u.TeV

Now we create an instance of the FluxPointsEstimator by passing the datasets and the energy binning:

[21]:

fpe = FluxPointsEstimator(datasets=datasets_joint, e_edges=e_edges)

flux_points = fpe.run()
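Each flux point comes from refitting only a normalisation factor, norm, of the best-fit model within one energy band, with the spectral shape held fixed. The resulting table then reports dnde = norm × ref_dnde. For the simple Cash statistic without background, the best norm has a closed form. The numpy sketch below checks that closed form against a brute-force scan over the same 0.2 to 5 range that appears in the table's norm_scan column. The counts are hypothetical, and gammapy actually fits with the WStat statistic of the on/off datasets, where no such simple ratio applies.

```python
import numpy as np


def best_norm_cash(n_obs, mu_ref):
    """Best-fit norm in one energy band for the Cash statistic without background.

    C(norm) = 2 * sum(norm * mu_ref - n_obs * ln(norm * mu_ref));
    dC/dnorm = 0 gives norm = sum(n_obs) / sum(mu_ref).
    """
    return np.sum(n_obs) / np.sum(mu_ref)


# Hypothetical counts in the reco bins of one band, and the counts predicted
# there by the best-fit global model (i.e. for norm = 1)
n_obs = np.array([30.0, 22.0, 15.0])
mu_ref = np.array([33.0, 25.0, 18.0])
norm = best_norm_cash(n_obs, mu_ref)

# Brute-force cross-check over the same norm range as the norm_scan column
scan = np.linspace(0.2, 5.0, 4801)
stat = [2.0 * np.sum(s * mu_ref - n_obs * np.log(s * mu_ref)) for s in scan]
norm_scan = scan[int(np.argmin(stat))]

# A flux point is the reference model scaled by norm; first table row:
dnde_point = 0.875 * 4.210e-11  # norm * ref_dnde, compare dnde = 3.683e-11
print(round(norm, 4), round(norm_scan, 3), f"{dnde_point:.3e}")
```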

Here is the table of the resulting flux points:

[22]:

flux_points.table_formatted

[22]:

| e_ref | e_min | e_max | ref_dnde | ref_flux | ref_eflux | ref_e2dnde | norm | loglike | norm_err | counts [4] | norm_errp | norm_errn | norm_ul | sqrt_ts | ts | norm_scan [11] | dloglike_scan [11] | dnde | dnde_ul | dnde_err | dnde_errp | dnde_errn |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| TeV | TeV | TeV | 1 / (cm2 s TeV) | 1 / (cm2 s) | TeV / (cm2 s) | TeV / (cm2 s) |  |  |  |  |  |  |  |  |  |  |  | 1 / (cm2 s TeV) | 1 / (cm2 s TeV) | 1 / (cm2 s TeV) | 1 / (cm2 s TeV) | 1 / (cm2 s TeV) |

| float64 | float64 | float64 | float64 | float64 | float64 | float64 | float64 | float64 | float64 | int64 | float64 | float64 | float64 | float64 | float64 | float64 | float64 | float64 | float64 | float64 | float64 | float64 |

| 0.859 | 0.737 | 1.002 | 4.210e-11 | 1.123e-11 | 9.564e-12 | 3.108e-11 | 0.875 | 13.920 | 0.118 | 0 .. 0 | 0.123 | 0.113 | 1.130 | 13.866 | 192.263 | 0.200 .. 5.000 | 82.45006199452813 .. 359.51821699218476 | 3.683e-11 | 4.759e-11 | 4.959e-12 | 5.164e-12 | 4.758e-12 |

| 1.261 | 1.002 | 1.588 | 1.530e-11 | 9.108e-12 | 1.126e-11 | 2.435e-11 | 0.944 | 12.766 | 0.088 | 31 .. 36 | 0.091 | 0.085 | 1.131 | 20.509 | 420.606 | 0.200 .. 5.000 | 168.4105202093644 .. 644.9695637434634 | 1.444e-11 | 1.731e-11 | 1.347e-12 | 1.396e-12 | 1.300e-12 |

| 1.852 | 1.588 | 2.160 | 5.561e-12 | 3.198e-12 | 5.871e-12 | 1.908e-11 | 1.176 | 9.888 | 0.147 | 27 .. 12 | 0.153 | 0.141 | 1.493 | 15.288 | 233.730 | 0.200 .. 5.000 | 114.5510226385427 .. 250.19524243046604 | 6.539e-12 | 8.303e-12 | 8.164e-13 | 8.497e-13 | 7.840e-13 |

| 2.518 | 2.160 | 2.936 | 2.475e-12 | 1.935e-12 | 4.829e-12 | 1.569e-11 | 1.240 | 9.208 | 0.175 | 10 .. 11 | 0.184 | 0.167 | 1.623 | 13.944 | 194.437 | 0.200 .. 5.000 | 95.99339781990182 .. 177.9901721011462 | 3.068e-12 | 4.017e-12 | 4.338e-13 | 4.548e-13 | 4.136e-13 |

| 3.697 | 2.936 | 4.656 | 8.993e-13 | 1.569e-12 | 5.687e-12 | 1.230e-11 | 0.946 | 14.894 | 0.159 | 17 .. 11 | 0.168 | 0.151 | 1.299 | 10.705 | 114.595 | 0.200 .. 5.000 | 60.74745714205392 .. 211.27686078865872 | 8.508e-13 | 1.168e-12 | 1.433e-13 | 1.511e-13 | 1.357e-13 |

| 5.429 | 4.656 | 6.330 | 3.269e-13 | 5.510e-13 | 2.964e-12 | 9.633e-12 | 1.156 | 8.758 | 0.271 | 9 .. 5 | 0.291 | 0.251 | 1.781 | 8.141 | 66.272 | 0.200 .. 5.000 | 38.67728017805366 .. 78.6380202729781 | 3.780e-13 | 5.823e-13 | 8.851e-14 | 9.527e-14 | 8.203e-14 |

| 7.971 | 6.330 | 10.037 | 1.188e-13 | 4.468e-13 | 3.491e-12 | 7.547e-12 | 1.074 | 12.420 | 0.283 | 5 .. 4 | 0.308 | 0.260 | 1.736 | 6.855 | 46.991 | 0.200 .. 5.000 | 33.38530405289388 .. 76.72079410711868 | 1.276e-13 | 2.062e-13 | 3.365e-14 | 3.654e-14 | 3.092e-14 |

| 11.703 | 10.037 | 13.647 | 4.317e-14 | 1.569e-13 | 1.820e-12 | 5.913e-12 | 0.945 | 10.501 | 0.450 | 0 .. 1 | 0.513 | 0.392 | 2.102 | 3.369 | 11.349 | 0.200 .. 5.000 | 15.707789721282893 .. 36.25710212455214 | 4.079e-14 | 9.076e-14 | 1.943e-14 | 2.214e-14 | 1.693e-14 |

| 17.183 | 13.647 | 21.636 | 1.569e-14 | 1.272e-13 | 2.143e-12 | 4.633e-12 | 0.329 | 7.230 | 0.298 | 1 .. 0 | 0.379 | 0.329 | 1.256 | 0.940 | 0.884 | 0.200 .. 5.000 | 7.444575932216505 .. 40.4292070718263 | 5.157e-15 | 1.971e-14 | 4.682e-15 | 5.950e-15 | 5.157e-15 |

| 25.229 | 21.636 | 29.419 | 5.702e-15 | 4.467e-14 | 1.117e-12 | 3.630e-12 | 0.000 | 0.524 | 0.001 | 0 .. 0 | 0.299 | 0.000 | 1.196 | 0.002 | 0.000 | 0.200 .. 5.000 | 1.1929670228175648 .. 17.25147513060675 | 1.018e-20 | 6.818e-15 | 8.331e-18 | 1.704e-15 | 1.018e-20 |
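The bin edges in the table (0.737 to 29.419 TeV) differ from the requested e_edges (0.7 to 30 TeV). The numbers are consistent with two rules:

- each requested edge is moved to the nearest edge of the reconstructed-energy binning e_reco;
- e_ref is the geometric mean of e_min and e_max.

The sketch below reproduces the table's edges and e_ref values under that reading. It is our interpretation of the output, not gammapy's code.

```python
import numpy as np

e_reco = np.logspace(-1, np.log10(40), 40)              # TeV, dataset binning
e_edges = np.logspace(np.log10(0.7), np.log10(30), 11)  # TeV, requested

# Move each requested edge to the nearest reco edge (distance measured in log E)
nearest = np.argmin(np.abs(np.log(e_edges[:, None]) - np.log(e_reco[None, :])), axis=1)
snapped = e_reco[nearest]
e_min, e_max = snapped[:-1], snapped[1:]
e_ref = np.sqrt(e_min * e_max)                           # geometric bin centre
print(np.round(snapped, 3))
print(np.round(e_ref, 3))
```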

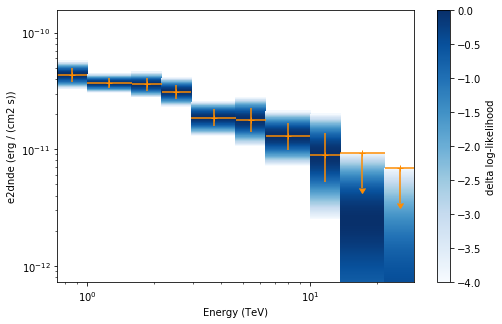

Now we plot the flux points and their likelihood profiles. For the plotting of upper limits we choose a threshold of TS < 4.

[23]:

plt.figure(figsize=(8, 5))

flux_points.table["is_ul"] = flux_points.table["ts"] < 4

ax = flux_points.plot(

energy_power=2, flux_unit="erg-1 cm-2 s-1", color="darkorange"

)

flux_points.to_sed_type("e2dnde").plot_likelihood(ax=ax)

[23]:

<matplotlib.axes._subplots.AxesSubplot at 0x11854c908>

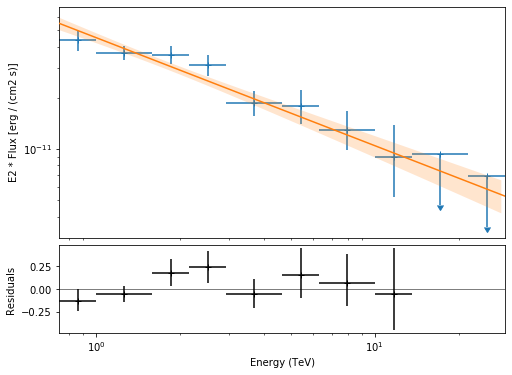
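With energy_power=2, the plot shows E² dN/dE. The is_ul column flags points with TS < 4 (equivalently sqrt_ts < 2) as upper limits. The small numpy sketch below reproduces both steps using a few rows copied from the flux-point table above. It converts to erg / (cm2 s), a common unit for SED plots, using the exact conversion 1 TeV = 1.602176634 erg.

```python
import numpy as np

TEV_TO_ERG = 1.602176634  # exact: 1 eV = 1.602176634e-19 J and 1 J = 1e7 erg

# Rows copied from the flux-point table above (first three bins and the last bin)
e_ref = np.array([0.859, 1.261, 1.852, 25.229])                # TeV
dnde = np.array([3.683e-11, 1.444e-11, 6.539e-12, 1.018e-20])  # 1 / (cm2 s TeV)
ts = np.array([192.263, 420.606, 233.730, 0.000])

# energy_power=2: E^2 dN/dE, converted from TeV / (cm2 s) to erg / (cm2 s)
e2dnde_erg = e_ref ** 2 * dnde * TEV_TO_ERG
is_ul = ts < 4  # same upper-limit threshold as in the cell above
print(e2dnde_erg, is_ul)
```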

The final plot with the best fit model, flux points and residuals can be quickly made like this:

[24]:

flux_points_dataset = FluxPointsDataset(

data=flux_points, model=model_best_joint

)

[25]:

plt.figure(figsize=(8, 6))

flux_points_dataset.peek();

Stack observations

An alternative approach to fitting the spectrum is to stack all observations first and then fit a model. For this, we first stack the individual datasets:

[26]:

dataset_stacked = Datasets(datasets_joint).stack_reduce()

Again, we set the model on the dataset we would like to fit (in this case it’s only a single one) and pass it to the Fit object:

[27]:

dataset_stacked.model = model

stacked_fit = Fit([dataset_stacked])

result_stacked = stacked_fit.run()

# make a copy to compare later

model_best_stacked = model.copy()

model_best_stacked.parameters.covariance = result_stacked.parameters.covariance

[28]:

print(result_stacked)

OptimizeResult

backend : minuit

method : minuit

success : True

message : Optimization terminated successfully.

nfev : 35

total stat : 29.38

[29]:

model_best_joint.parameters.to_table()

[29]:

| name | value | error | unit | min | max | frozen |

|---|---|---|---|---|---|---|

| str9 | float64 | float64 | str14 | float64 | float64 | bool |

| index | 2.635e+00 | 7.617e-02 |  | nan | nan | False |

| amplitude | 2.822e-11 | 1.894e-12 | cm-2 s-1 TeV-1 | nan | nan | False |

| reference | 1.000e+00 | 0.000e+00 | TeV | nan | nan | True |
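PowerLawSpectralModel describes dN/dE = amplitude × (E / reference)^(-index). The sketch below plugs the best-fit joint parameters from this table into the first flux-point bin (e_ref = 0.859 TeV, 0.737 to 1.002 TeV). It reproduces the ref_dnde, ref_e2dnde and ref_flux values of the flux-point table above, up to rounding of the printed numbers. The integral uses the analytic power-law formula, valid for index ≠ 1.

```python
import numpy as np

amplitude = 2.822e-11  # 1 / (cm2 s TeV), best-fit joint value from the table
index = 2.635
reference = 1.0        # TeV


def power_law_dnde(energy):
    """Differential flux dN/dE of the power law, energy in TeV."""
    return amplitude * (energy / reference) ** (-index)


def power_law_flux(e_min, e_max):
    """Integral flux between e_min and e_max (TeV), analytic for index != 1."""
    prefactor = amplitude * reference / (1.0 - index)
    return prefactor * ((e_max / reference) ** (1.0 - index) - (e_min / reference) ** (1.0 - index))


e_ref, e_min, e_max = 0.859, 0.737, 1.002  # first flux-point bin, TeV
dnde = power_law_dnde(e_ref)
print(f"dnde = {dnde:.3e}, e2dnde = {e_ref**2 * dnde:.3e}, flux = {power_law_flux(e_min, e_max):.3e}")
```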

[30]:

model_best_stacked.parameters.to_table()

[30]:

| name | value | error | unit | min | max | frozen |

|---|---|---|---|---|---|---|

| str9 | float64 | float64 | str14 | float64 | float64 | bool |

| index | 2.637e+00 | 7.619e-02 |  | nan | nan | False |

| amplitude | 2.825e-11 | 1.895e-12 | cm-2 s-1 TeV-1 | nan | nan | False |

| reference | 1.000e+00 | 0.000e+00 | TeV | nan | nan | True |
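The joint and stacked best-fit parameters in the two tables above agree closely. Here is a simplified argument for why, under assumptions that do not hold exactly in this analysis:

- Use the Cash statistic without background instead of WStat.
- Let dataset d have predicted counts μ_di = e_d f_i(θ) in bin i, with an energy-independent exposure e_d and a shared model f_i(θ).
- Write n_di for the observed counts, N_i = Σ_d n_di for the stacked counts and E = Σ_d e_d for the stacked exposure.

```latex
\begin{aligned}
C_\mathrm{stacked}(\theta) &= 2\sum_i \left[ E f_i(\theta) - N_i \ln\!\big(E f_i(\theta)\big) \right], \\
C_\mathrm{joint}(\theta) &= 2\sum_d \sum_i \left[ e_d f_i(\theta) - n_{di} \ln\!\big(e_d f_i(\theta)\big) \right] \\
  &= 2\sum_i \left[ E f_i(\theta) - N_i \ln f_i(\theta) \right] - 2\sum_d \sum_i n_{di} \ln e_d \\
  &= C_\mathrm{stacked}(\theta) + 2\sum_i N_i \ln E - 2\sum_d \sum_i n_{di} \ln e_d .
\end{aligned}
```

The last two terms do not depend on θ, so in this idealised case both fits have the same minimum and only the absolute statistic values differ. This is one reason why the printed total stat values (113.85 vs 29.38) are not comparable. In the real analysis, the statistic is WStat and the exposures are energy dependent and differ between observations, so the two results agree only approximately.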

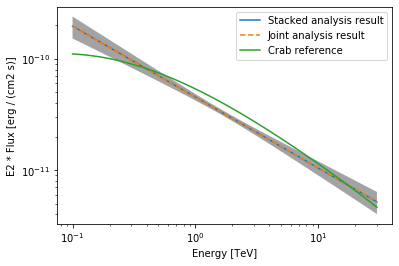

Finally, we compare the results of our stacked and joint analyses to a previously published Crab Nebula spectrum for reference. This reference model is available via gammapy.modeling.models.create_crab_spectral_model.

[31]:

plot_kwargs = {

"energy_range": [0.1, 30] * u.TeV,

"energy_power": 2,

"flux_unit": "erg-1 cm-2 s-1",

}

# plot stacked model

model_best_stacked.plot(**plot_kwargs, label="Stacked analysis result")

model_best_stacked.plot_error(**plot_kwargs)

# plot joint model

model_best_joint.plot(**plot_kwargs, label="Joint analysis result", ls="--")

model_best_joint.plot_error(**plot_kwargs)

create_crab_spectral_model().plot(**plot_kwargs, label="Crab reference")

plt.legend()

[31]:

<matplotlib.legend.Legend at 0x11b6ccb00>

Exercises

Now you have learned the basics of a spectral analysis with Gammapy. To practice you can continue with the following exercises:

- Fit a different spectral model to the data. You could try, e.g., an ExpCutoffPowerLawSpectralModel or a LogParabolaSpectralModel.

- Compute flux points for the stacked dataset.

- Create a FluxPointsDataset with the flux points you have computed for the stacked dataset and fit the flux points again with one of the spectral models. How does the result compare to the best-fit model that was directly fitted to the counts data?
